Testing five phones a day is a task.

Testing five hundred a day is a system.

Businesses operating in refurbishment, wholesale, retail trade-in programs, service environments or resale marketplaces do not simply run diagnostics. They operate processing pipelines designed to handle volume while maintaining consistency and traceability.

At scale, the goal is not just speed. A high-volume testing process must be:

- fast enough to support high throughput

- consistent enough to ensure repeatable grading

- traceable so outcomes can be audited

- trustworthy through certification and documentation

- scalable through batch processing and minimal manual touchpoints

- predictable so the cost per processed device remains stable

This article outlines a practical playbook for testing mobile devices at scale – covering workflow design, operational structure, exception handling, certification and the factors businesses should consider when selecting the right diagnostic platforms.

Why testing mobile devices at scale often breaks

Most high-volume testing operations do not struggle because technicians lack technical knowledge. The real issue is that their processes were originally designed for smaller volumes.

When a workflow that works for dozens of devices is applied to hundreds, inefficiencies quickly become visible. Devices begin to wait between stations. Locked devices are discovered late in the process. Technicians repeat steps unnecessarily and grading decisions vary between operators.

Over time, these problems compound. Teams end up diagnosing devices that cannot be resold, devices move back and forth between stations and return rates increase due to inconsistent grading.

The difference between a small testing setup and a scalable one is structure.

Effective operations rely on a progression of stages that gradually increase the level of effort invested in each device.

In practice, scaling requires a shift toward:

standardisation → prioritisation → automation → certification → measurement

The logic behind a high-volume testing workflow

At scale, testing should follow what operations teams often call investment logic. The amount of time spent evaluating a device should increase only after its viability has been confirmed.

A structured workflow typically progresses through several stages:

- Intake and device identification

- Basic functional verification

- Lock and status checks

- Automated diagnostics

- Data erasure and compliance steps

- Grading and valuation

- Routing to resale, repair or salvage streams

This sequence prevents situations where technicians spend time diagnosing a device only to discover later that it is locked or otherwise unsellable.

Another operational rule that becomes essential at scale is simple but powerful:

Scan to advance.

If a technician can move a device to the next step without scanning its identifier (IMEI, serial or barcode), traceability begins to break down. In high-volume environments, identity tracking is what allows teams to maintain control over hundreds or thousands of devices in motion.
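The scan-to-advance rule can be sketched in a few lines of Python. This is a minimal illustration, not any platform's implementation: the stage names follow the workflow listed above, while `TrackedDevice`, `ScanMismatch` and `advance` are hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field

# Stage order taken from the workflow described in this article.
STAGES = [
    "intake",
    "functional_check",
    "status_check",
    "diagnostics",
    "erasure",
    "grading",
    "routing",
]


class ScanMismatch(Exception):
    """Raised when the scanned identifier does not match the tracked device."""


@dataclass
class TrackedDevice:
    imei: str
    stage_index: int = 0
    history: list = field(default_factory=list)  # (completed stage, operator)

    @property
    def stage(self) -> str:
        return STAGES[self.stage_index]

    def advance(self, scanned_id: str, operator: str) -> str:
        """Move to the next stage only if the scan matches this device."""
        if scanned_id != self.imei:
            raise ScanMismatch(f"scanned {scanned_id!r}, expected {self.imei!r}")
        if self.stage_index >= len(STAGES) - 1:
            raise ValueError("device is already at the final stage")
        self.history.append((self.stage, operator))
        self.stage_index += 1
        return self.stage
```

Because the only way to change `stage_index` is through `advance`, a device cannot move forward without a matching scan, and every transition leaves a record of who performed it.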

Intake & identification

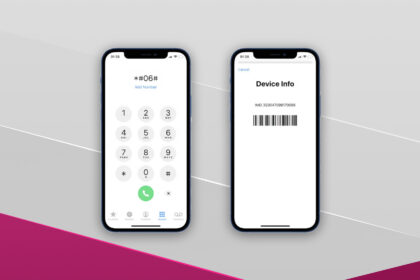

Every scalable workflow begins with device identity.

Before any testing begins, each device should be uniquely recorded and linked to its origin. This step establishes the traceability required to manage high volumes without confusion.

Typical intake information includes:

- the device IMEI or serial number

- an internal processing identifier

- the batch or vendor source

- the operator and timestamp associated with intake

Once devices begin moving through multiple testing stages, this identity layer becomes essential. Without it, tracking outcomes or resolving disputes becomes extremely difficult.
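As a rough sketch, the intake fields listed above map naturally onto a small immutable record. The field names, the `DEV-` identifier format and the in-memory counter are assumptions made for illustration; a production system would typically generate identifiers in a database.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from itertools import count

# Hypothetical in-memory sequence used only for this example.
_sequence = count(1)


@dataclass(frozen=True)
class IntakeRecord:
    device_id: str         # IMEI or serial number
    internal_id: str       # internal processing identifier
    batch: str             # batch or vendor source
    operator: str          # who performed intake
    received_at: datetime  # when intake happened (UTC)


def register_device(device_id: str, batch: str, operator: str) -> IntakeRecord:
    """Create the identity record that later stages attach their results to."""
    device_id = device_id.strip()
    if not device_id:
        raise ValueError("an IMEI or serial number is required at intake")
    return IntakeRecord(
        device_id=device_id,
        internal_id=f"DEV-{next(_sequence):06d}",
        batch=batch,
        operator=operator,
        received_at=datetime.now(timezone.utc),
    )
```

Making the record frozen means later stations can reference it but not silently alter where a device came from.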

Managing device flow between stations

Even the best diagnostic platform cannot compensate for poor operational flow.

Large refurbishment operations treat devices as units moving through a system rather than isolated tasks. Devices progress through defined stations and each station has a clear responsibility.

In practice, efficient facilities follow a forward-moving layout similar to:

Intake → Functional Check → Status Verification → Diagnostics → Erasure → Grading → Routing

Devices should rarely move backwards through the process unless routed through a dedicated exception path. Allowing uncontrolled backflow creates unnecessary handling, confusion over device status and increased processing delays.

Maintaining clear ownership between stages and limiting the number of devices waiting at each station helps preserve throughput.

Verifying basic device functionality

Before running deep diagnostics, technicians should confirm that the device demonstrates basic operational viability.

This typically includes verifying that the device powers on, charges correctly and has a usable display. Detecting severe faults at this stage prevents resources from being spent on devices that are already clearly destined for salvage or parts recovery.

This early filtering stage may seem simple, but it plays a critical role in protecting testing capacity when volumes are high.

Checking lock status and device eligibility

One of the most important steps in high-volume workflows is early status verification.

Devices that are activation-locked, enrolled in enterprise management systems or otherwise restricted may not be eligible for resale. Detecting these issues early ensures they can be routed appropriately rather than consuming testing time.

Typical checks include verifying:

- Activation Lock or similar account locks

- Google Factory Reset Protection (FRP)

- Mobile Device Management (MDM) enrollment

- IMEI blacklist status where applicable

- Carrier restrictions

When these checks are performed early, operations avoid investing time in devices that cannot be sold.
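One way to picture this gate is a function that turns the status checks into a list of blocking issues. The sketch below assumes the flags have already been obtained from a diagnostic platform or lookup service; `StatusReport` and `blocking_issues` are hypothetical names, and whether a carrier restriction actually blocks resale is a policy decision for each business.

```python
from dataclasses import dataclass


@dataclass
class StatusReport:
    # Flags are assumed to be supplied by an external check, not computed here.
    activation_locked: bool = False
    frp_locked: bool = False
    mdm_enrolled: bool = False
    imei_blacklisted: bool = False
    carrier_restricted: bool = False


# Attribute name -> human-readable label, in the order the article lists them.
_LABELS = {
    "activation_locked": "Activation Lock",
    "frp_locked": "Google FRP",
    "mdm_enrolled": "MDM enrollment",
    "imei_blacklisted": "IMEI blacklist",
    "carrier_restricted": "Carrier restriction",
}


def blocking_issues(report: StatusReport) -> list[str]:
    """Return the labels of every status check that flagged the device."""
    return [label for attr, label in _LABELS.items() if getattr(report, attr)]


def eligible_for_diagnostics(report: StatusReport) -> bool:
    """A device continues on the main line only when nothing was flagged."""
    return not blocking_issues(report)
```

Returning the list of issues rather than a bare yes/no gives the exception process something concrete to act on.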

Automated diagnostics as a decision layer

Once a device passes viability and status checks, automated diagnostics can begin.

At scale, diagnostics are not simply about identifying faults. They act as a decision engine that helps determine how a device should be handled next.

Automation ensures that testing remains consistent across hundreds or thousands of units. It also allows workflows to adapt dynamically, stopping tests early when critical faults are detected and routing devices toward repair or salvage paths.

By removing manual judgement from routine evaluations, automated diagnostics help maintain consistency across teams and reduce training complexity.
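The idea of stopping early on a critical fault can be sketched as a loop over ordered test steps. Everything here is illustrative: the step format, the `run_diagnostics` name and the `"repair_or_salvage"` and `"erasure"` labels are assumptions, and the individual checks are placeholders for whatever a real platform measures.

```python
from typing import Callable

# Each step is (name, check function, is_critical).
Step = tuple[str, Callable[[dict], bool], bool]


def run_diagnostics(device: dict, steps: list[Step]) -> dict:
    """Run steps in order and stop as soon as a critical step fails."""
    results: dict[str, bool] = {}
    for name, check, critical in steps:
        passed = check(device)
        results[name] = passed
        if critical and not passed:
            # No point spending more time: hand the device to repair or salvage.
            return {
                "results": results,
                "stopped_early": True,
                "next": "repair_or_salvage",
            }
    # Non-critical failures are kept in the results so grading can use them.
    return {"results": results, "stopped_early": False, "next": "erasure"}
```

Ordering the cheapest critical checks first keeps the average time per device low, since failing units leave the queue after only a few steps.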

Scaling through parallel testing

Another defining characteristic of large-scale operations is parallel processing.

Testing devices sequentially creates a natural limit to throughput. Even with a skilled team, evaluating devices one at a time restricts how many units can be processed in a day.

Batch-based workflows allow multiple devices to be evaluated simultaneously. Technicians interact with groups of devices rather than individual units, monitoring progress across the batch while the system performs automated checks.

This shift from sequential testing to parallel processing is what allows operations to scale beyond manual limits.
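To make the contrast concrete, here is a small sketch using Python's standard `concurrent.futures` module. The `check_device` function is a stand-in that merely sleeps to simulate waiting on a connected phone; the names and the default of eight parallel slots are assumptions for the example.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def check_device(device_id: str) -> tuple[str, str]:
    """Placeholder for an automated check; sleeps to simulate device I/O."""
    time.sleep(0.05)
    return device_id, "pass"


def check_batch(device_ids: list[str], max_parallel: int = 8) -> dict[str, str]:
    """Run the same check on a whole batch, several devices at a time."""
    with ThreadPoolExecutor(max_workers=max_parallel) as pool:
        # pool.map preserves input order, so results line up with device_ids.
        return dict(pool.map(check_device, device_ids))
```

With sixteen devices and eight slots, the batch takes roughly two rounds of the check rather than sixteen, which is the effect batch stations aim for in practice.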

Secure data erasure and compliance

Before devices can enter resale channels, data must be securely removed.

Certified erasure processes provide proof that data has been sanitised according to recognised standards such as NIST SP 800-88. In resale environments, this documentation helps protect both the seller and the eventual buyer.

Reliable erasure workflows also link the outcome of the process to the device’s identity, ensuring that the erasure event can be verified later if required.

Turning device condition into market value

Testing alone does not determine a device’s resale value.

Grading does.

Grading translates the technical condition of a device into a market-ready classification. To remain reliable across large volumes, grading criteria must be standardised and applied consistently.

Most grading frameworks consider several factors:

- functional performance

- cosmetic condition

- battery health

- repair requirements

Standardising these criteria reduces subjective decisions and ensures that buyers receive devices that match the advertised grade.
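A standardised rule set can be as simple as one function that every operator's results pass through. The sketch below is illustrative only: the 1 to 5 cosmetic scale, the battery thresholds and the grade labels are invented for the example and are not an industry standard.

```python
from dataclasses import dataclass


@dataclass
class Condition:
    functional_pass: bool    # all functional tests passed
    cosmetic_score: int      # 1 (heavy wear) to 5 (like new), hypothetical scale
    battery_health_pct: int  # reported battery health, 0 to 100
    needs_repair: bool


def grade(c: Condition) -> str:
    """Map a device's condition to a grade using fixed, illustrative thresholds."""
    if not c.functional_pass or c.needs_repair:
        return "Repair"
    if c.cosmetic_score >= 5 and c.battery_health_pct >= 90:
        return "A"
    if c.cosmetic_score >= 4 and c.battery_health_pct >= 80:
        return "B"
    return "C"
```

Because the thresholds live in one place, changing a grading policy means editing a single function rather than retraining every technician.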

Routing devices to their final destination

Once a device’s condition is known, it can be routed to its appropriate destination.

Depending on the outcome of diagnostics and grading, devices may move toward:

- resale-ready inventory

- repair or refurbishment streams

- parts recovery

- return or recycling channels

Effective routing ensures that effort is aligned with the device’s potential value.
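Routing is often just a lookup from outcome to destination. The mapping below is a hypothetical example: the outcome labels and destination names are assumptions, and anything the table does not recognise is sent for manual review rather than guessed at.

```python
# Outcome label -> destination stream (all names are illustrative).
ROUTES = {
    "A": "resale_inventory",
    "B": "resale_inventory",
    "C": "resale_inventory",
    "Repair": "repair_stream",
    "Salvage": "parts_recovery",
    "Locked": "return_or_recycling",
}


def route(outcome: str) -> str:
    """Return the destination for an outcome, defaulting to manual review."""
    return ROUTES.get(outcome, "manual_review")
```

Keeping the table as data makes it easy to audit and to adjust when a new stream, such as a wholesale channel, is added.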

Managing exceptions without disrupting the line

Not every device fits neatly into the standard workflow.

Devices that fail to boot, exhibit water damage or trigger lock alerts require special handling. Instead of allowing these cases to slow down the main pipeline, scalable operations isolate them into an exception lane.

This approach allows the primary workflow to maintain speed while specialists investigate edge cases separately.
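In code terms, an exception lane is a side queue that catches problem devices so the main loop keeps moving. This is a minimal sketch under assumed names: `NeedsSpecialist`, `run_main_line` and the `handle` callback are hypothetical.

```python
from collections import deque
from typing import Callable


class NeedsSpecialist(Exception):
    """Signals that a device should leave the main line for separate handling."""


def run_main_line(devices: list[str], handle: Callable[[str], str]):
    """Process devices in order, diverting problem units instead of stopping."""
    completed: list[str] = []
    exception_lane: deque[tuple[str, str]] = deque()
    for device in devices:
        try:
            completed.append(handle(device))
        except NeedsSpecialist as reason:
            # Record why the device was diverted, then carry on with the next one.
            exception_lane.append((device, str(reason)))
    return completed, exception_lane
```

The main line never blocks on a single unit, and the reason stored alongside each diverted device tells the specialist where to start.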

Measuring operational performance

Once a structured workflow is in place, measurement becomes essential.

Monitoring operational metrics helps teams identify bottlenecks and refine processes over time. Common indicators include:

- devices processed per technician

- first-pass success rates

- retest frequency

- processing time per device

- return rates by grade category

By tracking these metrics, operations teams can continuously improve efficiency and predictability.
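The indicators above can be computed from a simple per-device log. The record layout and the `summarise` function below are assumptions made for illustration; real operations would usually pull these fields from their diagnostics platform or inventory system.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import mean


@dataclass
class ProcessedDevice:
    technician: str
    minutes: float  # processing time for this device
    attempts: int   # 1 means no retest was needed
    grade: str
    returned: bool  # True if the buyer later returned the device


def summarise(records: list[ProcessedDevice]) -> dict:
    """Compute the operational indicators listed above from a device log."""
    if not records:
        raise ValueError("no records to summarise")
    total = len(records)
    count_by_grade = Counter(r.grade for r in records)
    returns_by_grade = Counter(r.grade for r in records if r.returned)
    return {
        "devices_per_technician": dict(Counter(r.technician for r in records)),
        "first_pass_rate": sum(r.attempts == 1 for r in records) / total,
        "retest_rate": sum(r.attempts > 1 for r in records) / total,
        "avg_minutes_per_device": mean(r.minutes for r in records),
        "return_rate_by_grade": {
            g: returns_by_grade[g] / n for g, n in count_by_grade.items()
        },
    }
```

Splitting return rates by grade is what exposes inconsistent grading: if one grade is returned far more often than the others, its criteria are the first place to look.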

Understanding the real cost of testing at scale

When evaluating testing platforms, businesses often focus on subscription pricing. In reality, the largest costs usually come from inefficiencies in the workflow itself.

Time spent re-testing devices, inconsistent grading and slow routing decisions often have a larger impact on profitability than software pricing.
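A back-of-the-envelope model shows why. The function below is a simplified sketch, and every parameter is an assumption: it treats the cost of a device as the software fee plus labour (inflated by retests) plus the expected cost of returns.

```python
def cost_per_device(
    licence_fee: float,           # software cost per device
    labour_rate_per_hour: float,  # technician cost per hour
    minutes_per_test: float,      # hands-on time for one test pass
    retest_rate: float,           # fraction of devices tested a second time
    return_rate: float,           # fraction of sold devices that come back
    cost_per_return: float,       # shipping, handling and re-processing
) -> float:
    """Estimate the all-in cost of processing one device (illustrative model)."""
    test_passes = 1 + retest_rate
    labour = labour_rate_per_hour * (minutes_per_test / 60) * test_passes
    expected_returns = return_rate * cost_per_return
    return licence_fee + labour + expected_returns
```

With made-up inputs such as a 1.00 licence fee, 30.00 per hour of labour and six minutes per test, labour alone is three times the software cost before any retests or returns are counted, so small improvements in retest and return rates can outweigh differences in subscription price.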

Different diagnostic platforms follow different pricing structures.

| Platform | Pricing Structure |

|---|---|

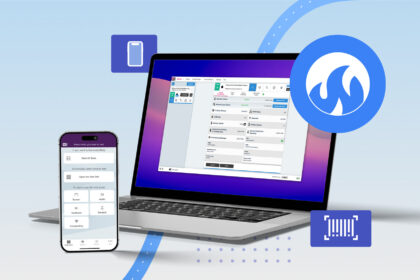

| M360 Diagnostics | Public pricing with volume-based scaling |

| PhoneCheck | Enterprise quote-based |

| NSYS Group | Enterprise quote-based |

| Blancco | Bundle / enterprise pricing |

| Blackbelt 360 | Contact-based pricing |

Why certification reports matter more than most people think

In resale markets, trust is critical.

Buyers often cannot directly verify the history or testing process behind a device. Certification reports provide structured documentation that helps establish confidence in the device’s condition and testing history.

Certification reports help you:

- reduce disputes

- reduce returns

- increase buyer confidence

- standardise your output at scale

- sell faster because trust friction is lower

Platforms that combine diagnostics with structured reporting help maintain consistency across large device volumes. For example, M360 integrates diagnostics, grading, erasure and reporting into a unified workflow designed for refurbishment operations.

Blueprint for getting started

Building a scalable testing environment does not happen overnight. Most operations evolve gradually as volume increases.

A practical starting point usually involves:

- standardising intake procedures

- implementing early lock checks

- automating diagnostics

- applying consistent grading rules

- separating exceptions from the main workflow

- monitoring operational metrics

Over time, these changes transform testing from a series of tasks into a structured system capable of supporting large device volumes.

FAQ: Testing mobile devices at scale

1. What does it mean to test mobile devices at scale?

It means running a standardised, barcode-driven workflow (intake → checks → diagnostics → certified erasure → grading → routing) with batch operations and audit-friendly reporting so hundreds or thousands of devices can be processed consistently.

2. What’s the minimum setup to test mobile devices at scale?

At minimum: a barcode scanner, reliable multi-port power, stable Wi-Fi near stations, device stands, a defined pass/repair/reject routing system and a professional diagnostics platform that supports batch testing and reporting.

3. How many devices can one technician test per hour?

It depends on your test depth. Triage flows can be significantly faster than full grading and certification. The biggest drivers are standardised station layout, batch operations and how you handle exceptions like locked or non-booting devices without blocking the line.

4. Do I really need certified data erasure? Can’t I just factory reset?

A factory reset is not proof of secure deletion. Certified erasure provides logs and certificates tied to IMEI/serial and an audit trail showing who performed the action and when. This documentation is often required in resale and enterprise environments.

5. How do we handle iCloud/Activation Lock and Google FRP at scale?

Create an exception lane. Detect locks early, remove those devices from the main testing line immediately and route them to a dedicated process for credential removal, MDM release, return to seller or repair escalation.

6. What reports should we generate for resale?

Generate a device report including diagnostics results, grading outcome, key checks (where applicable) and certified data-erasure documentation. This improves buyer confidence and reduces return rates.

7. Is M360’s data erasure automatic?

M360’s erasure process is manually triggered by the operator as part of the workflow. This ensures deliberate control, traceability and documented execution.

8. How should we compare diagnostic platforms beyond price?

Compare batch capabilities, device coverage, depth of testing, lock detection, reporting/certification features, integrations (API/export), training time and total operational cost (retests, returns, queue time), not just subscription price.